Large Language Models and Their Future Applications

Large Language Models (LLMs) are widely considered among the most significant achievements in modern artificial intelligence. Trained with deep learning techniques on massive text datasets, they learn statistical patterns of language that allow them to interpret and generate human text with considerable fluency.

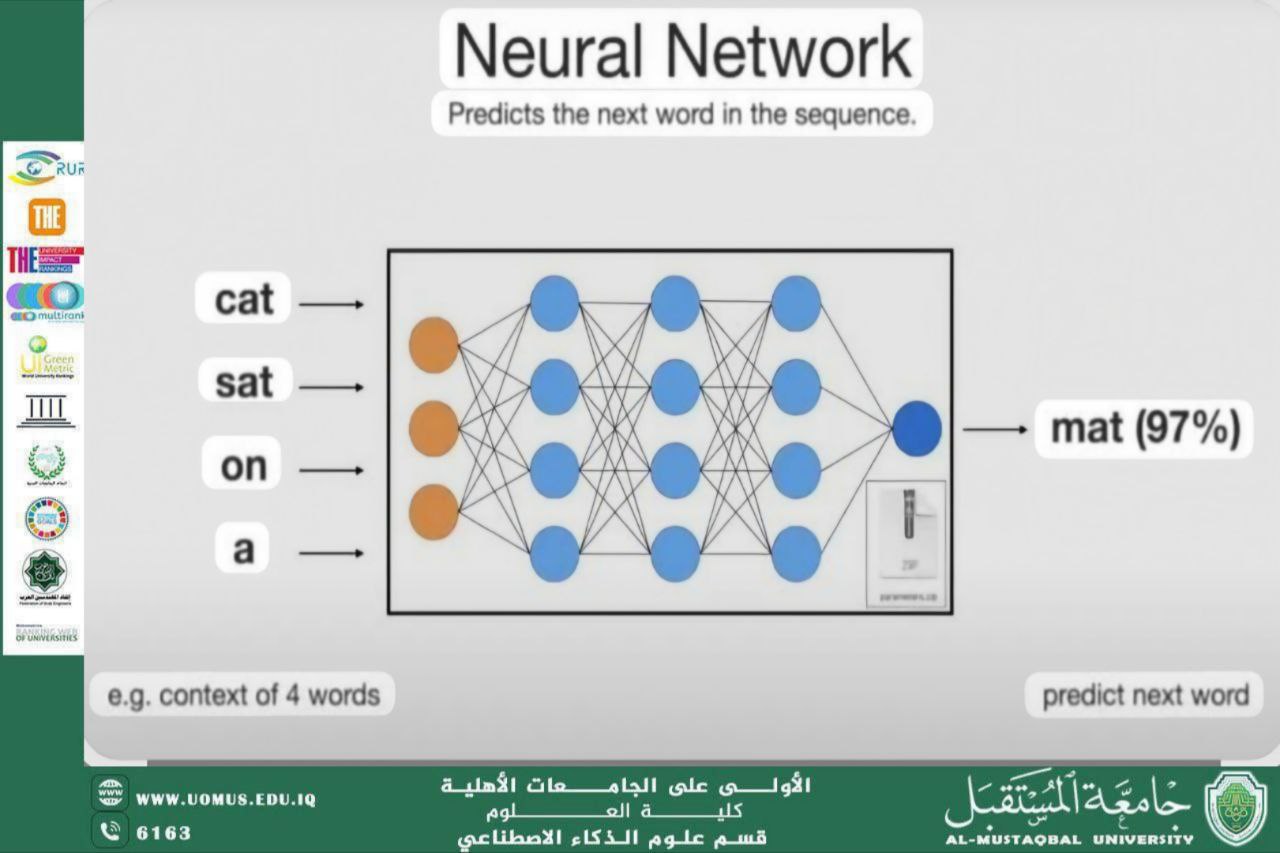

Most of these models are built on the transformer architecture, a type of deep neural network whose attention mechanism lets the model weigh every word in a passage against the others. This is what enables them to capture context, meaning, and relationships between words and sentences.
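
To make the idea of capturing relationships between words more concrete, here is a minimal sketch of scaled dot-product attention, the core operation inside a transformer. It is an illustration under simplifying assumptions, not how any particular model is implemented: the token list and the 2-dimensional embeddings are made-up numbers, the function names `softmax` and `attention` are hypothetical, and the embeddings are used directly as queries, keys, and values, whereas real transformers first pass them through learned projection matrices.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    dim = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this token's query with every token's key,
        # scaled by sqrt(dim) as in the standard formulation.
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim)
            for k in keys
        ]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        mixed = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Toy embeddings for three tokens (assumed values, for illustration only).
tokens = ["bank", "river", "money"]
embeddings = [[1.0, 1.0], [1.0, 0.0], [0.0, 1.0]]

# Simplification: no learned projections, so Q = K = V = embeddings.
outputs, weights = attention(embeddings, embeddings, embeddings)
for token, row in zip(tokens, weights):
    print(token, [round(w, 3) for w in row])
```

Each printed row shows how strongly one token attends to every token in the sequence. With these toy vectors, "river" gives more weight to "bank" than to "money" because their embeddings overlap more, which is a small-scale picture of how context shapes each word's representation.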

LLMs have reshaped many language tasks, including machine translation, text analysis, document summarization, question answering, and content generation. In education, they support learning by providing explanations, assisting with curriculum design, and offering academic guidance to students.

In medicine, large language models assist with clinical decision support and medical record analysis, and they help improve communication between healthcare providers and patients. In business, they enhance customer service, analyze user feedback, and automate administrative tasks.

Development of large language models is moving toward greater accuracy, deeper contextual understanding, and stronger ethical safeguards, with reduced bias and improved reliability. They are expected to play an important role in scientific research, innovation, and knowledge production, which could make them a cornerstone of future intelligent societies.